MLOps for Enterprise AI

There was a time when building machine learning (ML) models and taking them to production was a struggle. Teams had trouble sourcing and storing quality data, models were unstable, projects depended heavily on IT systems, and it was hard to find talent with the right mix of artificial intelligence and machine learning (AIML) and IT skills. Times have changed. Some of these issues still exist, but enterprises now use ML models far more widely.

Organizations have started to see the benefits of ML models, and they continue to invest in closing the remaining gaps and growing their use of AIML. The growth of ML models in production, however, brings new challenges, such as how to manage and maintain ML assets and how to monitor the models. Since 2019, there has been a surge in the adoption of machine learning models within organizations, and MLOps has emerged as a trending keyword. It is not just a trend, though; it is a necessary element of the complete AIML ecosystem.

Advantages of MLOps

A simple Google search on MLOps will give you a variety of definitions, because it is an emerging field and everyone has their own view of what it encompasses. Broadly, MLOps is about supporting the AIML ecosystem: managing the model lifecycle through automation, consistently producing reliable, high-quality results without performance degradation, and ensuring that AIML products can scale.

The primary goal of MLOps is to maximize ML model performance, increase agility in model development, and improve ROI. This is not easy to achieve. A fully automated MLOps solution relies on several components working together. These components include:

- Automated ML model building pipelines: Similar to the concept of continuous integration and continuous delivery (CI/CD) pipelines in DevOps, we try to set up ML pipelines to continuously build, update, and make the models ready to go into production accurately and seamlessly.

- Model serving: This is one of the most critical components, which provides a way to deploy the models in a scalable and efficient way so that the model users can continue using the ML model results without loss of service.

- Model version control: This is an important step in an AIML workflow. It tracks code changes, maintains data history, and enables collaboration between teams. As a result, experiments become more agile, and their results can be reproduced whenever required.

- Model/data monitoring: This is another critical component. It measures the key performance indicators (KPIs) related to ML model health and data quality.

- Security and governance: This step enforces access controls on ML model results and tracks activity, which minimizes the risk of results being consumed by an unintended audience or by bad actors. Data is the real asset nowadays, and ML provides guidance based on that data, so it is essential to protect and manage the results so that they stay with the intended audience.
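To make the model/data monitoring component more concrete, here is a minimal sketch of one commonly used data-drift KPI, the Population Stability Index (PSI). It compares how a feature or model score is distributed in live traffic against the training data. The function names, the bin edges, and the 0.1 / 0.25 thresholds (a widely quoted rule of thumb, not a standard) are assumptions made for illustration; they are not part of any specific MLOps tool.

```python
import math
from typing import List, Sequence


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Return the fraction of values that falls into each bin defined by `edges`."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        # The bin index is the number of edges that are <= v.
        idx = sum(1 for e in edges if v >= e)
        counts[idx] += 1
    total = len(values)
    return [c / total for c in counts]


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    edges: Sequence[float],
    eps: float = 1e-6,
) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    psi = 0.0
    for e, a in zip(bin_fractions(expected, edges), bin_fractions(actual, edges)):
        # Clamp empty bins to a small value so the logarithm stays defined.
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


def drift_status(psi: float, warn: float = 0.1, alert: float = 0.25) -> str:
    """Map a PSI value to a coarse status (thresholds are a rule of thumb)."""
    if psi >= alert:
        return "alert"
    if psi >= warn:
        return "warn"
    return "ok"


# Hypothetical data: scores seen at training time vs. scores seen in production.
training_scores = [i / 100 for i in range(100)]      # spread over 0.00 .. 0.99
live_scores = [0.5 + i / 200 for i in range(100)]    # shifted to 0.50 .. 0.995
edges = [0.25, 0.5, 0.75]

print(drift_status(population_stability_index(training_scores, live_scores, edges)))
```

In a real setup, a check like this would typically run on a schedule against recent production data and feed an alerting or retraining trigger.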

MLOps Maturity Assessments

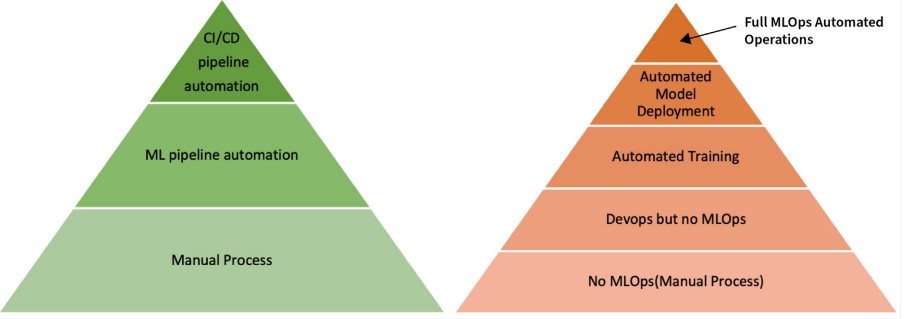

It is highly unlikely that anyone will automate all of these components in one go, and it is unrealistic to expect this to happen within a short period. To track progress and measure the level of automation achieved with MLOps, Google and Microsoft have each published a maturity model.

Google’s maturity model consists of three levels:

- Level 0: Manual process

- Level 1: ML pipeline automation

- Level 2: CI/CD pipeline automation

Similarly, Microsoft’s maturity model consists of five levels:

- Level 0: No MLOps

- Level 1: DevOps but no MLOps

- Level 2: Automated Training

- Level 3: Automated Model Deployment

- Level 4: Full MLOps Automated Operations

Figure 1: Comparing Google's and Microsoft's maturity models

Personally, I feel Microsoft’s maturity model provides a better way to track MLOps progress in an enterprise. It is more detailed, and its stages go beyond CI/CD pipeline automation, which is only one component of a fully automated MLOps function. A fully automated MLOps system also includes automated model monitoring, prescribed improvement areas, and the goal of a zero-downtime system that delivers reliable results every time.
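As a rough illustration of how such a maturity model can be used for self-assessment, the sketch below encodes the five Microsoft levels listed above and walks a yes/no checklist in order. The checklist fields and the rule that each level requires all earlier ones are simplifying assumptions for this example; the published model describes each level in far more detail.

```python
from dataclasses import dataclass
from enum import IntEnum


class MaturityLevel(IntEnum):
    NO_MLOPS = 0
    DEVOPS_NO_MLOPS = 1
    AUTOMATED_TRAINING = 2
    AUTOMATED_MODEL_DEPLOYMENT = 3
    FULL_MLOPS_AUTOMATED_OPERATIONS = 4


@dataclass
class Capabilities:
    # Hypothetical checklist; one flag per level, purely for illustration.
    automated_builds_and_tests: bool = False
    automated_training: bool = False
    automated_deployment: bool = False
    automated_monitoring_and_retraining: bool = False


def assess(c: Capabilities) -> MaturityLevel:
    """Return the highest level whose capability, and all earlier ones, are met."""
    ladder = [
        (c.automated_builds_and_tests, MaturityLevel.DEVOPS_NO_MLOPS),
        (c.automated_training, MaturityLevel.AUTOMATED_TRAINING),
        (c.automated_deployment, MaturityLevel.AUTOMATED_MODEL_DEPLOYMENT),
        (c.automated_monitoring_and_retraining,
         MaturityLevel.FULL_MLOPS_AUTOMATED_OPERATIONS),
    ]
    level = MaturityLevel.NO_MLOPS
    for achieved, candidate in ladder:
        if not achieved:
            break  # a gap at this rung caps the assessment here
        level = candidate
    return level


team = Capabilities(automated_builds_and_tests=True, automated_training=True)
print(assess(team).name)
```

Treating the levels as a ladder means that automating deployment without automating training does not raise the score, which keeps the assessment conservative.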

So far, we have discussed the components of an MLOps system and the maturity levels, which give us a benchmark for where we are in the MLOps transformation journey. The next question is: Are there any tools available in the market to help with this journey? My answer would be: “Many.” But there is no one-size-fits-all solution.

The following are some of the open-source tools that are considered to be a part of the MLOps ecosystem:

1. MLflow: This is an open-source MLOps platform for managing the end-to-end ML lifecycle. It allows you to track model experiments, manage and deploy ML models, package your code into reusable components, manage a model registry, and serve models as REST endpoints. You can get more information from its GitHub page.

2. Evidently AI: This is an open-source tool used to analyze and monitor ML models. It has features for monitoring model health and data quality, and for analyzing data through various interactive visualizations.

3. DVC: This is an open-source version control system for ML projects. It helps with ML experiment management, project version control, and collaboration, and it makes models shareable and reproducible. Built on top of Git, the widely used version control tool, it is designed to overcome challenges specific to ML model development, such as handling large data files and tracking ML model code and metrics.
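To show why large data files need different handling than code, here is a minimal sketch of the general idea behind data versioning tools such as DVC: the large file goes into a content-addressed cache, and only a small pointer file containing its hash is committed to Git. This is not DVC's actual file format or command set; the function names, the `.pointer.json` suffix, and the cache layout are all hypothetical.

```python
import hashlib
import json
import shutil
from pathlib import Path


def file_digest(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large datasets do not need to fit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def track(data_file: Path, cache_dir: Path) -> Path:
    """Copy the data into a content-addressed cache and write a small pointer file.

    Only the pointer file would be committed to Git; the data stays in the cache.
    """
    digest = file_digest(data_file)
    cache_dir.mkdir(parents=True, exist_ok=True)
    cached = cache_dir / digest
    if not cached.exists():  # identical content is stored only once
        shutil.copy2(data_file, cached)
    pointer = data_file.with_name(data_file.name + ".pointer.json")
    meta = {
        "path": data_file.name,
        "sha256": digest,
        "size": data_file.stat().st_size,
    }
    pointer.write_text(json.dumps(meta, indent=2))
    return pointer


def restore(pointer: Path, cache_dir: Path) -> Path:
    """Recreate the data file that a pointer file refers to."""
    meta = json.loads(pointer.read_text())
    target = pointer.with_name(meta["path"])
    shutil.copy2(cache_dir / meta["sha256"], target)
    return target
```

Checking out an older Git commit then brings back the older pointer file, and calling `restore` on it recovers exactly the dataset that was used for that experiment.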

Apart from these, if you are ready to invest money, you can go for some packaged MLOps tools provided by Fiddler, DataRobot, Arize.ai, Comet, and many more.

Bottom Line

Which tool to use depends on your needs. We are in the early phases of the MLOps evolution, and the process will only mature in the coming years. Everyone’s problem is unique. Some want to deploy models to production quickly, some want an efficient way to secure and govern ML models, and others need help with model serving and monitoring. We need to decide on our top priority and then start the transformation from there, which provides the quickest path to realizing value and ROI.

